Scientists Are Trying to See Our Dreams

Who can capture dreams? Researchers try, making videos from brain scans and dreamscapes from machine learning.

Do androids, as sci-fi novelist Philip K. Dick asked, really dream of electric sheep? The purpose and meaning of dreams have long been debated. Now scientists are getting closer to deciphering what humans see as they sleep—and how a robot can simulate it.

In 2013 neuroscientist Yukiyasu Kamitani had test subjects take hundreds of brief naps in an MRI machine, repeatedly waking them so they could describe their dreams. Kamitani had already isolated the unique brain patterns for certain objects he’d shown subjects while they were awake. Their brains were scanned for those patterns as they napped, and a computer program automatically turned the basic contents of their dreams into short videos. The study found these were 70 percent accurate compared with what subjects remembered of their real dreams.

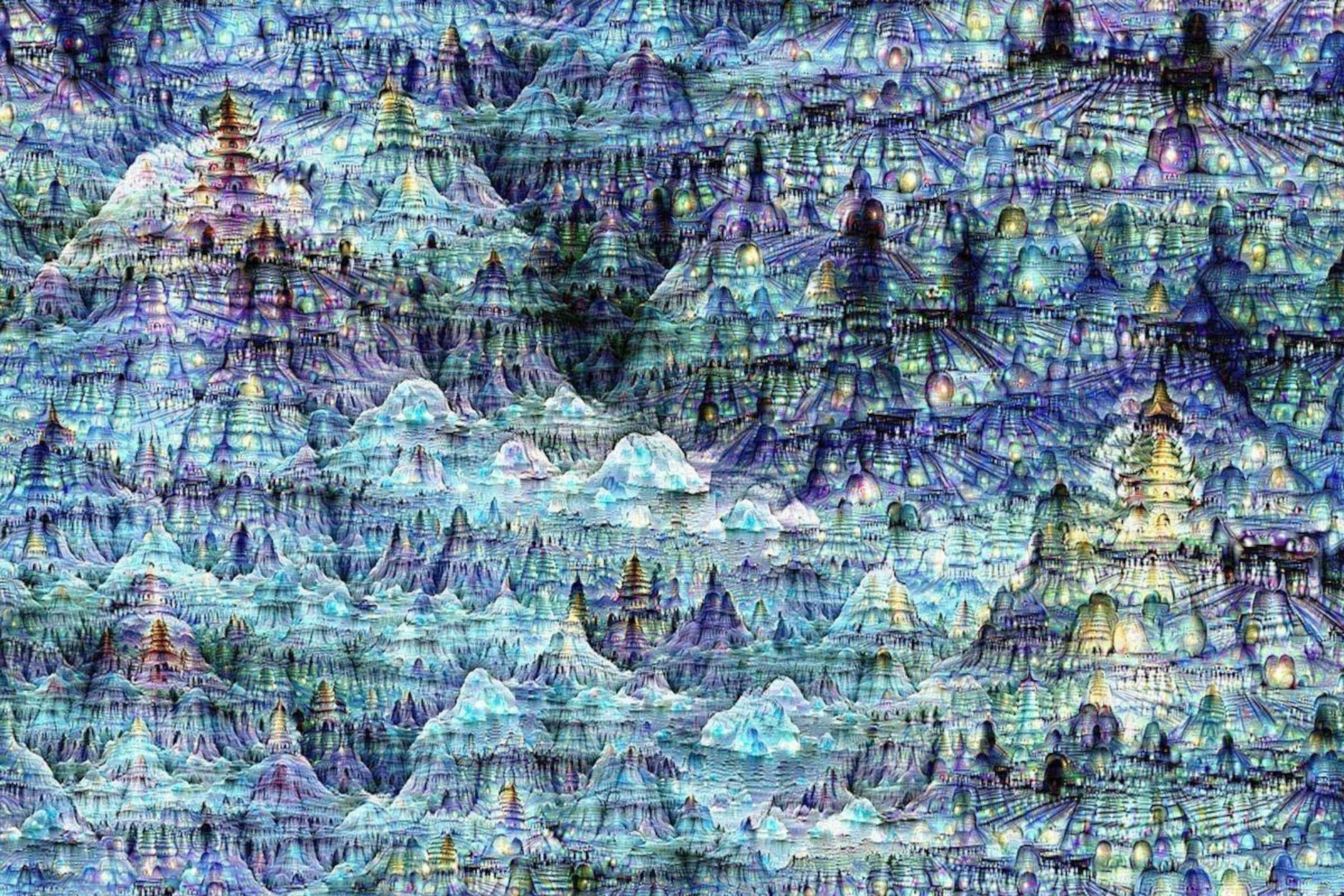

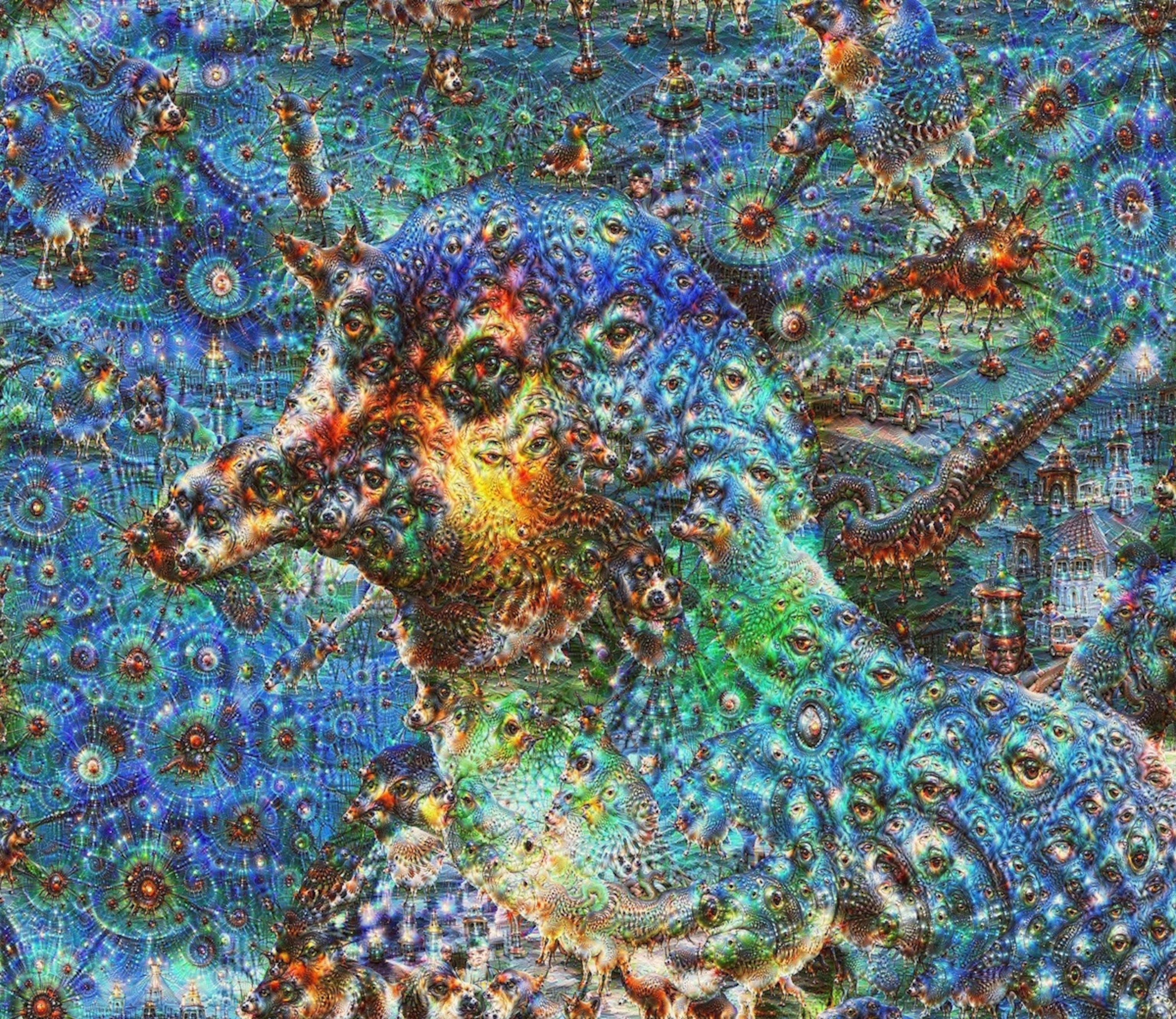

Two years later Google engineers also captured the dreamlike images of a computer. They fed millions of images into a brain-inspired computer algorithm—a network of artificial neurons—to study how it learned to identify objects. Then they put it through DeepDream, a program that enables the network to build its own algorithm-fueled dreamscape by finding shapes in an image of random visual noise, like the static on an old TV. The computer generated a psychedelic scene from its machine-learned knowledge. As in a dream, previously seen images were reconfigured into new patterns.

A precise recording of human dreams won’t be possible until scientists either discover how dreams originate in the brain or build an encyclopedia of brain activity that corresponds to every thought, says Jack Gallant, a professor of psychology at UC Berkeley. He likens it to building a language translation program: “You have a language but nothing it references to.”