Language evolution witnessed in lab experiments

Simon Kirby and Hannah Cornish are watching evolution take place within the confines of their laboratory. But they are studying neither bodies nor genes; their interest lies in languages, and how they change over time.

School lessons and textbooks aside, we learn most of the features of the languages we speak by listening to native speakers. Their sentences convey their thoughts, but they also hint at the structure of the language itself. That allows people who are learning a new language to infer something about its structure from the way its sentences are put together.

In the past, computer models have shown that behaviours like these, which are passed on through repeated cycles of observation and learning, eventually evolve to become easier to learn. But these simplified models are far removed from realistic learning. In his book on language evolution, Derek Bickerton described them as a “case of looking for your car-keys where the street-lamps are.” The big question is whether real languages have evolved in the same way.

What’s really needed are experiments that test the adaptations that languages pick up over time, using the brains of living people rather than the software of computers. And that’s exactly what Kirby and Cornish have done. Together with Kenny Smith at Northumbria University, they have provided the first experimental evidence that as languages are passed on, they evolve structures that make them easier to transmit effectively.

The team tracked the progress of artificial languages as they passed down a chain of volunteers. They found that in just ten iterations, the made-up tongues had become more structured and easier to learn. What’s more, these adaptive features arose without any plan or design on the part of the speakers. This appearance of design without the guiding will of a designer offers another compelling parallel with biological evolution.

Chinese whispers

The trio asked a group of 80 volunteers to learn an “alien language” that described a set of pictures. The pictures covered all 27 combinations of three shapes (square, circle or triangle), three colours (red, black or blue) and three types of movement (horizontal, bouncing or spiralling). Each picture was paired with a randomly made-up word of two to four syllables.

After training, the volunteers were shown all 27 pictures and asked to say what word the aliens would use for each one. The catch was that they had only been trained on about half of the pairs. The first volunteer’s answers were used to train and test the second; the second volunteer’s answers were used on the third; and so on.
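
Stripped of the human element, the procedure is a loop. The Python sketch below is a hypothetical simplification, not the researchers’ code: `learn` stands in for a volunteer (no function captures what people actually did), the names are invented for illustration, and showing exactly 13 of the 27 pictures, picked at random, is an assumption, since the article says only “about half”.

```python
import itertools
import random
from typing import Callable, Dict, List, Tuple

Meaning = Tuple[str, str, str]  # (shape, colour, motion)
Language = Dict[Meaning, str]   # meaning -> word

SHAPES = ["square", "circle", "triangle"]
COLOURS = ["red", "black", "blue"]
MOTIONS = ["horizontal", "bouncing", "spiralling"]

# Every shape x colour x motion combination: 3 * 3 * 3 = 27 pictures.
MEANINGS: List[Meaning] = list(itertools.product(SHAPES, COLOURS, MOTIONS))

# A learner sees some (meaning, word) pairs and must name every meaning.
Learner = Callable[[Language, List[Meaning]], Language]


def run_chain(initial: Language, learn: Learner, generations: int = 10,
              seed: int = 0) -> List[Language]:
    """Pass a language down a chain of learners, Chinese-whispers style.

    Each generation is trained on a random half (13 of 27, an assumption)
    of the previous generation's output, then asked to name all 27 pictures;
    its answers become the next generation's training data.
    """
    rng = random.Random(seed)
    history = [initial]
    language = initial
    for _ in range(generations):
        shown = rng.sample(MEANINGS, len(MEANINGS) // 2)
        training = {m: language[m] for m in shown}
        language = learn(training, MEANINGS)
        history.append(language)
    return history
```

Any function with the same signature can be plugged in as `learn`, from a pure memoriser to one that generalises from shared features, which makes it easy to see how much the outcome depends on learners inferring structure.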

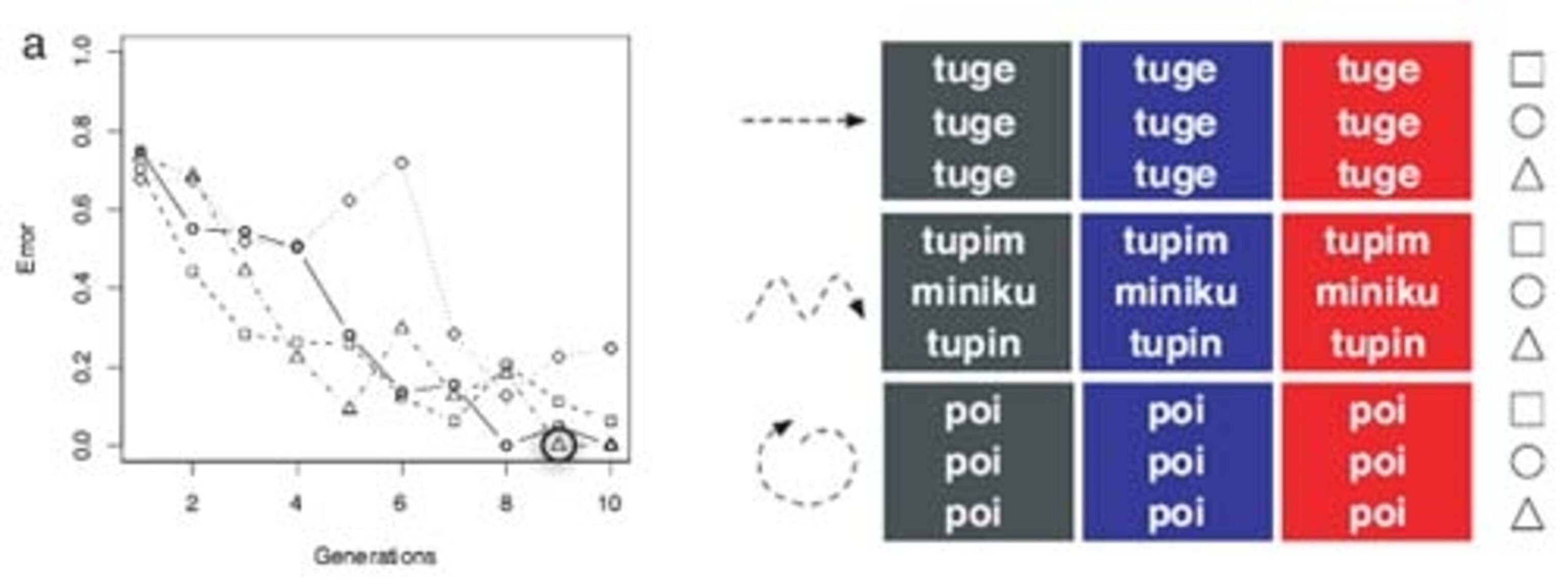

This was done for four separate chains, each starting from a different set of words. In every chain, the language had clearly become easier to learn by the 10th round, with later learners making fewer mistakes than those before them. A couple of the languages were even transmitted perfectly by the end: volunteers came up with exactly the same set of 27 words as their predecessors, even though they had never seen half of the word-image pairs.
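
The article does not say how mistakes were counted. One plausible yardstick, assumed here rather than taken from the source, is the edit (Levenshtein) distance between the word a volunteer gave for a picture and the word the previous volunteer gave, scaled by word length and averaged over all pictures. The function names are hypothetical.

```python
from typing import Dict, Hashable


def edit_distance(a: str, b: str) -> int:
    """Levenshtein distance: fewest insertions, deletions and substitutions."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            curr.append(min(prev[j] + 1,                # delete ca
                            curr[j - 1] + 1,            # insert cb
                            prev[j - 1] + (ca != cb)))  # substitute if different
        prev = curr
    return prev[-1]


def transmission_error(before: Dict[Hashable, str],
                       after: Dict[Hashable, str]) -> float:
    """Mean edit distance between two generations' words for the same pictures,
    each divided by the longer word's length: 0 means a perfect copy."""
    total = 0.0
    for meaning, old in before.items():
        new = after[meaning]
        longest = max(len(old), len(new)) or 1
        total += edit_distance(old, new) / longest
    return total / len(before)
```

Under this measure, a perfectly transmitted language like the ones described above scores exactly 0, and a score that shrinks from one generation to the next corresponds to “fewer mistakes”.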

The languages also became much more structured over time. At the start, every image had its own unique word that could only be learned by rote, as nothing in the words themselves gave any clue to the meanings they conveyed. But by the end, single words were reused to express more than one meaning and the total number of words had plummeted to a mere handful from the original count of 27.

At first glance, you might think that this shedding of words explains why the later participants made fewer mistakes. But if the languages had simplified in a random way, the volunteers still shouldn’t have been able to achieve perfect scores. For example, if the same word meant both a black, bouncing circle and a red, spiralling square, you couldn’t draw any general conclusions about its use, and you’d be back to learning by rote. That strategy could never produce perfect scores for volunteers who were only trained on half of the word-image pairs.

The key to the perfect scores was that the languages became simpler in systematic ways. For example, by the 4th round in one of the chains, the word tuge had come to mean any horizontally moving object, regardless of shape or colour. By the 6th round, poi meant any bouncing object, and by the end, the spiralling objects had three different words depending on their shape. It was precisely this structure that allowed people to deduce the words for objects they had never seen before.
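
To see why systematic reuse, and not just a smaller vocabulary, makes unseen pictures guessable, here is a toy learner in Python. Its guessing rule (reuse a word whenever every training picture sharing some features with the target has that same word, trying the fewest features first) is a simplification of my own, not a model of what the volunteers did. The three spiralling words are hypothetical placeholders, because the article does not list them.

```python
import itertools
from typing import Dict, List, Optional, Tuple

Meaning = Tuple[str, str, str]  # (shape, colour, motion)
SHAPES = ["square", "circle", "triangle"]
COLOURS = ["red", "black", "blue"]
MOTIONS = ["horizontal", "bouncing", "spiralling"]
MEANINGS: List[Meaning] = list(itertools.product(SHAPES, COLOURS, MOTIONS))

# Hypothetical placeholders: the article does not give the spiralling words.
SPIRAL_WORDS = {"square": "SPIRAL-A", "circle": "SPIRAL-B", "triangle": "SPIRAL-C"}


def final_language(meaning: Meaning) -> str:
    """Word choice modelled on the chain in the text (tuge, poi, ...)."""
    shape, _colour, motion = meaning
    if motion == "horizontal":
        return "tuge"
    if motion == "bouncing":
        return "poi"
    return SPIRAL_WORDS[shape]


def guess(meaning: Meaning, training: Dict[Meaning, str]) -> Optional[str]:
    """Guess a word for `meaning` from training pairs alone.

    Tries the smallest sets of features first: if every training picture that
    matches `meaning` on those features has the same word, reuse that word.
    Returns None when no such regularity exists (only rote learning is left).
    """
    for size in range(len(meaning) + 1):
        for features in itertools.combinations(range(len(meaning)), size):
            words = {w for m, w in training.items()
                     if all(m[f] == meaning[f] for f in features)}
            if len(words) == 1:
                return words.pop()
    return None
```

With one picture held out of the structured language, which uses only five distinct words, `guess` recovers the right word from the remaining pairs. Given a language with a unique random word for every picture, it returns None: the rote-learning dead end.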

Express yourself

A nice first result, but the ambiguity of the end products is a problem. Languages are tools for communication and no matter how easy one is to learn, it would be useless if it couldn’t put across different ideas clearly. In short, languages need to be expressive. But with one small tweak to their experiment, Kirby and Cornish showed that languages can do this while still evolving to be learnable and structured.

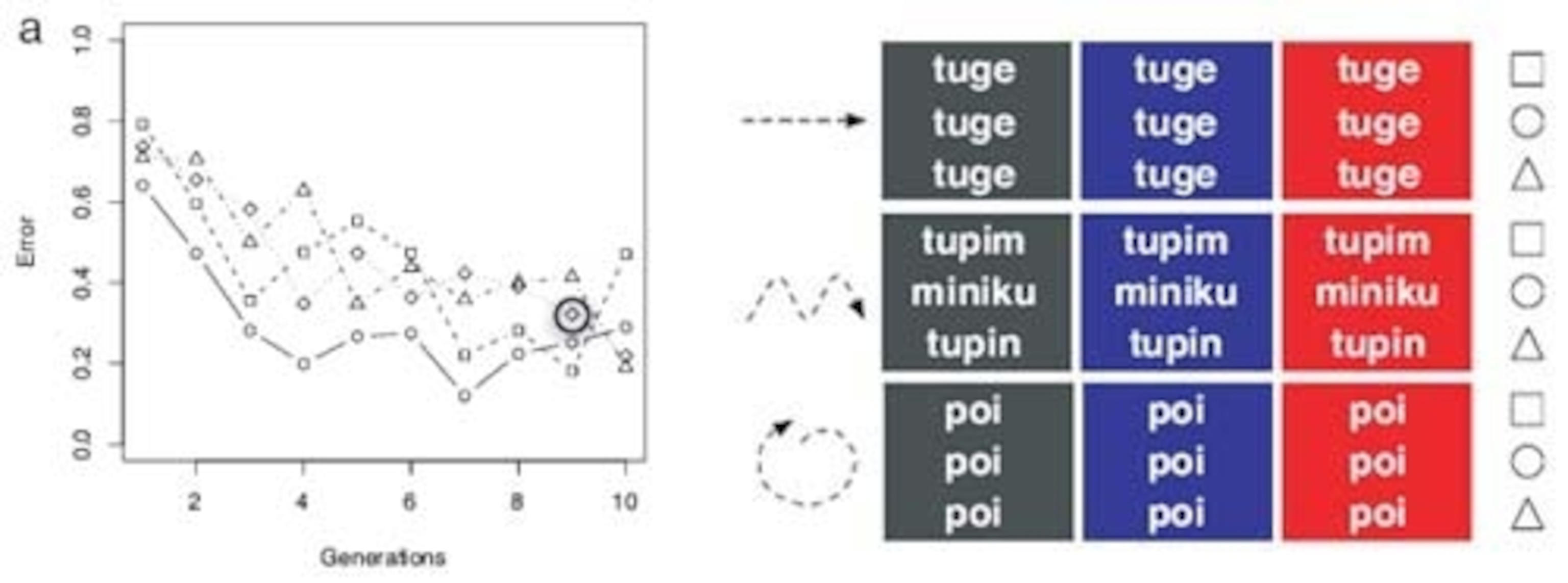

This time round, they filtered the pairs between rounds so that if several images were represented by the same word, all but one of them were removed. As a result, each word could only ever have a single ‘meaning’, and the languages remained relatively wordy until the end. They still became easier to learn, with later volunteers making fewer mistakes (although nobody achieved a perfect score). And they still became increasingly structured, but in a very different way.
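
The filtering step can be sketched in a few lines of Python. The article says only that all but one of the images sharing a word were removed; choosing the survivor at random is an assumption, and `filter_homonyms` is a hypothetical name.

```python
import random
from collections import defaultdict
from typing import Dict, Hashable, List


def filter_homonyms(pairs: Dict[Hashable, str],
                    rng: random.Random) -> Dict[Hashable, str]:
    """Keep only one meaning per word before the next learner is trained.

    If several pictures share a word, all but one are dropped. Which one
    survives is chosen at random here (an assumption).
    """
    meanings_by_word: Dict[str, List[Hashable]] = defaultdict(list)
    for meaning, word in pairs.items():
        meanings_by_word[word].append(meaning)
    return {rng.choice(meanings): word
            for word, meanings in meanings_by_word.items()}
```

Applied to a language like the tuge/poi one from the first experiment, this leaves just one example of tuge and one of poi for the next learner, so collapsing many pictures onto one word no longer pays off.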

This time, the structure lay in the way the words were put together. By the 6th round in every single chain, each word was made up of three parts that referred to the object’s shape, colour and movement. For example, in one chain, words that described blue shapes started with n, those for black shapes started with l and those for red shapes began with r. Likewise, words that ended in ki described horizontal movement, those ending in plo were for bouncing shapes and those ending in pilu meant spiralling. So a red, bouncing circle might be rehoplo, while a blue, spiralling square would be nepilu.
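
The payoff of this kind of structure is that a handful of parts can name every picture, including unseen ones. In the Python sketch below, the colour prefixes and motion suffixes come from the article; the shape stems are an assumption, obtained by splitting the two example words (rehoplo, nepilu) one plausible way, and the triangle stem is a hypothetical placeholder because the article does not give it.

```python
import itertools

# Colour prefixes and motion suffixes as described in the article.
COLOUR_PREFIX = {"blue": "n", "black": "l", "red": "r"}
MOTION_SUFFIX = {"horizontal": "ki", "bouncing": "plo", "spiralling": "pilu"}

# Assumed shape stems: "eho" and "e" come from splitting rehoplo and nepilu;
# "<tri>" is a hypothetical placeholder for the unknown triangle stem.
SHAPE_STEM = {"circle": "eho", "square": "e", "triangle": "<tri>"}


def word_for(colour: str, shape: str, motion: str) -> str:
    """Build a word by concatenating colour, shape and motion parts."""
    return COLOUR_PREFIX[colour] + SHAPE_STEM[shape] + MOTION_SUFFIX[motion]


# Nine parts are enough to name all 27 pictures, seen or unseen.
ALL_WORDS = {
    (c, s, m): word_for(c, s, m)
    for c, s, m in itertools.product(COLOUR_PREFIX, SHAPE_STEM, MOTION_SUFFIX)
}
```

For instance, `word_for("red", "circle", "bouncing")` rebuilds rehoplo, and every one of the 27 words comes out distinct, so the language stays fully expressive while remaining easy to learn from a partial sample.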

Kirby and Cornish argue that the results of both experiments are relevant to the way real languages work. In their first test, the artificial languages became more structured as each word came to cover a systematic set of meanings. The same thing happens in real life: most nouns refer to whole categories of objects, and only proper nouns refer to individual things. All cats are known as cats, regardless of their shape, colour or movement.

In the second experiment, the languages became structured by assigning specific meanings to components that can then be combined. Natural languages are rife with this kind of combinatorial structure, at the level of both words and sentences. In the experiment, it emerged spontaneously, but only because Kirby and Cornish deliberately removed multiple meanings between each round. That’s certainly an artificial step, but they argue that it reflects the pressures that real-world languages face: to be both expressive and easy to transmit.

In both cases, it was vitally important that, from the volunteers’ perspective, everyone was doing the same task. They weren’t told that they were working from the outputs of people who had gone before them, nor did they guess what was going on. They weren’t trying to improve on the language in any way; their goal was to reproduce it as accurately as they could, and many of them didn’t even realise that they were being tested on images they hadn’t seen during training. As in the world of genes and species, “cultural transmission can lead to the appearance of design without a designer”.

Update: Jonah Lehrer at the Frontal Cortex chips in.

Reference: PNAS 10.1073/pnas.0707835105

Images: courtesy of PNAS; photo by me.