So Science Gets It Wrong. Then What?

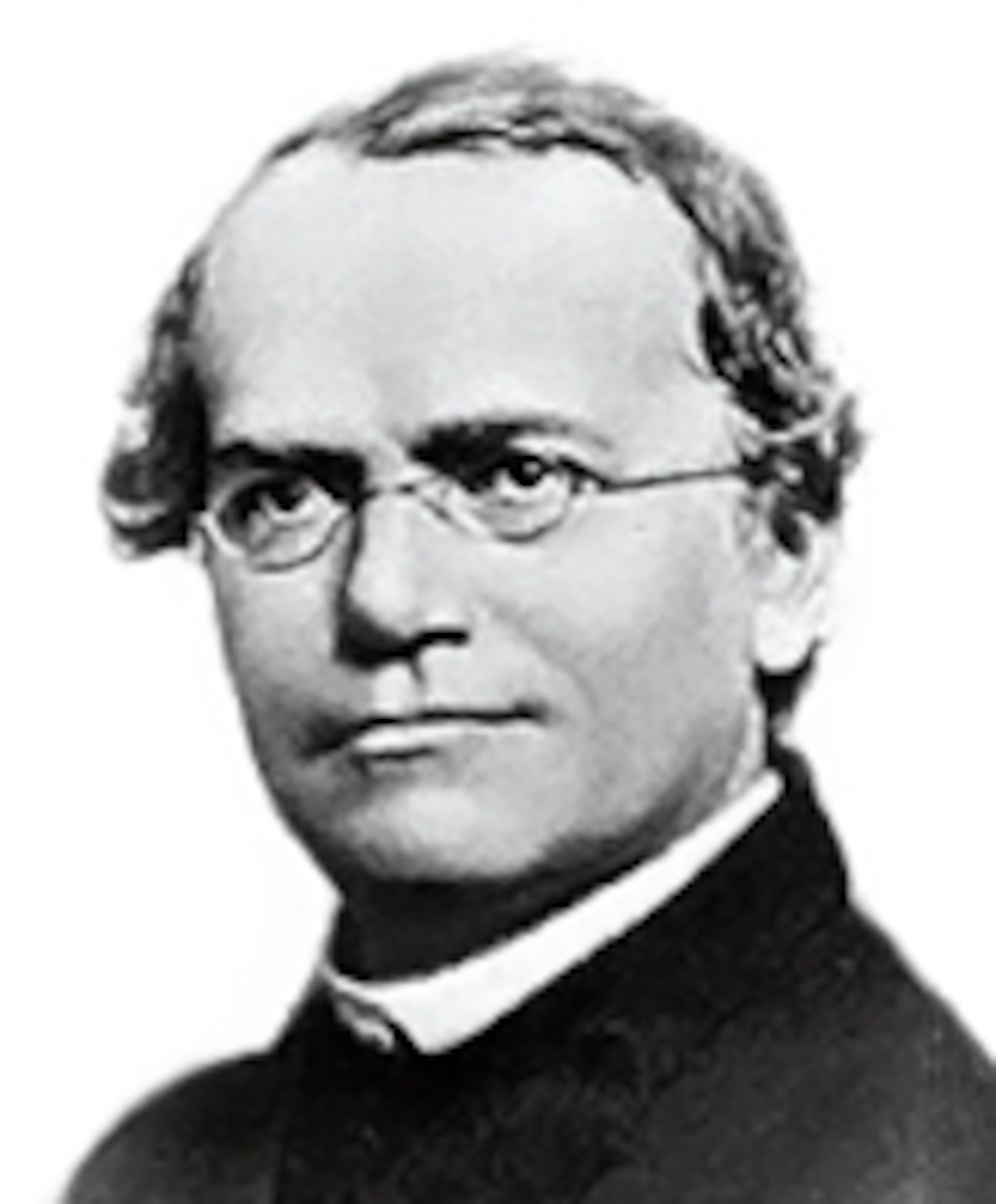

It’s hard to think of a scientist whose reputation is more squeaky-clean than the shy Austrian monk Gregor Mendel. His story invariably begins in the abbey garden, where from 1856 to 1863 he bred thousands of pea plants and painstakingly counted how traits pass from one generation to the next.

His data showed that many features are inherited in predictable ratios: dominant traits, like round seeds, show up in about three out of four daughter plants, whereas recessive ones, like wrinkled seeds, appear in just one in four. Mendel discovered how genes work before anybody knew that they existed.

The ideas behind Mendel’s experiments are sound, but according to an infamous statistical analysis, the good friar fudged data to fit his pre-existing notions. In 1936, R.A. Fisher of University College London argued that Mendel’s tallies of traits (claiming, for instance, that 5,474 daughter seeds were round and 1,850 wrinkled) matched the expected 3:1 ratio too closely to be true. “The data of most, if not all, of the experiments have been falsified so as to agree closely with Mendel’s expectations,” Fisher wrote. In other words: Mendel was either presenting a choice subset of his data, or cooking the books outright.
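To get a feel for the kind of check involved, here is a minimal Python sketch of a chi-square goodness-of-fit test on the round-versus-wrinkled tally quoted above. It is not Fisher’s actual analysis. The function name `chi_square_3_to_1` is made up for illustration, and the p-value uses a shortcut that only holds for one degree of freedom.

```python
import math


def chi_square_3_to_1(dominant, recessive):
    """Compare two observed counts against an expected 3:1 split.

    Hypothetical helper for illustration only. Returns the chi-square
    statistic and its p-value (one degree of freedom).
    """
    total = dominant + recessive
    expected_dominant = total * 3 / 4
    expected_recessive = total / 4

    # Sum of (observed - expected)^2 / expected over both categories.
    stat = (
        (dominant - expected_dominant) ** 2 / expected_dominant
        + (recessive - expected_recessive) ** 2 / expected_recessive
    )

    # With one degree of freedom, the chance of a deviation at least this
    # large equals erfc(sqrt(stat / 2)).
    p_value = math.erfc(math.sqrt(stat / 2))
    return stat, p_value


# Counts quoted in the article: 5,474 round seeds and 1,850 wrinkled.
stat, p = chi_square_3_to_1(5474, 1850)
print(f"observed ratio: {5474 / 1850:.3f} to 1")
print(f"chi-square = {stat:.3f}, p = {p:.2f}")
```

A small chi-square statistic means the counts sit close to the 3:1 expectation. One tally by itself says little either way; the sketch shows only the basic arithmetic behind this sort of argument.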

A few years ago, statisticians in Portugal re-analyzed Mendel’s data and Fisher’s calculations, and suggested that Mendel was guilty of an unconscious and systematic bias, rather than fraud*. Whether Mendel cheated or not, there’s no question that fudges and mistakes and transgressions happen in science. So, fellow science-lovers, should we be worried about this?

Scientific misconduct has existed since the beginning of science, to surprisingly little fanfare. The scientific establishment tends to sweep these cases under the rug with the claim that science is self-correcting. The argument goes something like this: If, as in Mendel’s case, fudged data uncovers or bolsters a real phenomenon, then there’s no harm done. Outright fraud is rare, and its impact, if any, is short-lived. If an experiment can’t be replicated, then its refutation will soon be published, and the original, flawed idea will fade away into obscurity.

That’s certainly a comfortable position. Too bad it’s not exactly true. The scientific record is not so easily corrected.

A fascinating essay went up last week on Retraction Watch, a blog that tracks retractions, or the formal withdrawals of scientific studies from journals. For a scientist, retracting a study is kind of like a kid writing VOID on top of a birthday check. Except, with many retractions, it’s more like voiding a check long after the money’s been deposited and spent. Once a study enters the scientific literature, it gets read by many people and cited in many other studies. If it’s retracted a few years later, no one’s likely to notice. The check’s already cleared.

So back to this new essay. In it, cancer biologist David Vaux recounts when, in 1995, a new paper on organ transplantation came out in the prestigious journal Nature. Vaux was so impressed by the results that he wrote about them in a commentary (which Nature calls a “News and Views”) for the same issue of the journal. And after that, his lab started working on the same biological process. “Little did we know,” Vaux writes, “that instead of providing an answer to transplant rejection, these experiments would teach us a great deal about editorial practices and the difficulty of correcting errors once they appear in the literature.”

Long story short, Vaux’s team was not able to replicate the paper’s results. They tried, and failed, to publish their rebuttal in Nature. It was published in another journal, and within a couple of years, another group published a similar rebuttal in Nature Medicine. But still, Nature wouldn’t budge. Fed up, Vaux made a somewhat crazy move: He retracted his own News and Views piece. Go read his essay to get his full account. His retraction appeared in 1998, and still no one has replicated the other team’s findings.

This is not an isolated incident. Retraction Watch’s raison d’être is based on that fact. Some of the many comments on the post are also telling:

Peer007: I feel Vaux’s pain. I spent two years and four submissions before Nature was willing to publish a piece of correspondence highlighting major technical problems in a Nature paper. As both us and the original authors agreed on the technical problems (but differed in how they affect the interpretation of the experiment), this should have been a much less painful experience. Nature simply was not interested in setting the record straight. Shameful.

littlegreyrabbit: What you describe is not rare or unusual in the slightest, it is what many excellent and productive careers are built on. Don’t upset the apple cart.

arthurdentition: It seems like high impact Journals today are in the same position as the catholic church in recent years with regard to sexual abuse…Why can’t these journals get that and start respecting their target audience; rational, highly educated and articulate adults who know that it cannot all be true.

Peer007: The published literature is fallible. Putting fraud aside, mistakes will be made, controls omitted, variables unaccounted for, and incorrect conclusions will be drawn. It is inevitable. Journals, in my opinion, need to adjust their posture to account for the fact that all papers are a work in progress, and be more receptive to publishing corrections, correspondence, and (worse case scenario) retractions.

Right: Scientists are fallible. And in this age of increasingly intense competition for funding, researchers are arguably more likely to unintentionally mess up and/or cheat than ever before. Recent surveys have found that about 1 percent of all scientists seriously misbehave, and upwards of 20 percent commit questionable offenses, such as ignoring inconvenient data points, stealing others’ ideas, or publishing the same finding more than once.

But I don’t like it when scientists blame the federal budget for misconduct. Tight funding may be part of the problem, but blaming it is not a solution. If we want the public to better understand the scientific process (and, perhaps equally important, to trust it), then errors of any kind must be talked about and dealt with, swiftly and transparently.

Fortunately, scientific misconduct has been getting more attention in the past few years (hence the success of Retraction Watch). In 2010, a group of 340 individuals from 51 countries published the Singapore Statement on Research Integrity, the first international statement of principles meant to guide governments and institutions in setting sound policies. The leader of that group, Nicholas Steneck, director of the Research Ethics Program at the University of Michigan, says the most important audience for these efforts is the new generation of scientists. “It’s vitally important that we make young researchers aware of the problems, and also aware of where they’re likely to get pressures and how they can resist,” he told me in 2011.

R.A. Fisher, the statistician who put a spotlight on the inconsistencies in Mendel’s plant data, had a similar message for young scientists: Check, and re-check, and then scrutinize the data.

“In spite of the immense publicity it has received, [Mendel’s] work has not often been examined with sufficient care to prevent its many extra-ordinary features being overlooked,” Fisher wrote. “Each generation, perhaps, found in Mendel’s paper only what it expected to find.”

—

*Update: Others have also questioned the attacks against Mendel. On Twitter, Leonid Kruglyak pointed me to this example from 2007: http://www.genetics.org/content/175/3/975.full.