Here is the story of how simple video-game creatures evolved a memory.

These simple creatures were devised by a group of scientists to study life’s complexity. There are lots of ways to define complexity, but the one that they were interested in exploring has to do with how organisms behave.

Every creature from a microbe to a mountain lion can respond to its surroundings. E. coli has sensors on its surface to detect certain molecules, and it processes those signals to make very simple decisions about how it will move. It travels in a straight line by spinning long twisted tails counterclockwise. If it switches to clockwise, the tails unravel and the microbe tumbles.

A worm with a few hundred neurons can take in a lot more information from its senses, and can respond with more behaviors. And we, with a hundred billion or so neurons in our brains, have an even wider range of responses to our world.

A group of scientists from Caltech, the University of Wisconsin, Michigan State University, and the Allen Institute for Brain Science wanted to better understand how this complexity changes as life evolves. Does life get more complex as it adapts to its environment? Is more complex always better? Or, judging from the abundance of E. coli and its fellow microbes on the planet today, is complexity overrated?

There are two massive problems with trying to answer these questions. One is that it’s hard to run an experiment on living things to watch them evolve different levels of complexity. The other is that it’s difficult to measure that complexity in a precise way. Simply counting the number of neurons in a brain isn’t good enough, for example. If a hundred billion neurons are joined together randomly, they won’t generate any useful behavior. How those neurons work together matters, too.

There are more precise ways to think about this complexity. William Bialek of Princeton has proposed that complexity is a measurement of how much of the future an organism can predict from the past. Giulio Tononi of the University of Wisconsin has proposed that complexity is a measure of how many parts of a brain can separately process information, and how well they combine that information into a seamless whole. (I wrote more about Tononi’s Integrated Information Theory in the New York Times.)

Both Bialek and Tononi have laid out their theories in mathematical terms, so that you can use them to measure complexity in terms of bits. You can say the complexity of a system is precisely 10 bits. You don’t have to just throw up your hands and say, “It’s complicated.”
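To make "complexity in bits" a little more concrete, here is a toy sketch in Python. It estimates the mutual information between one step of a signal and the next: how many bits the present tells you about what comes next. This is a drastically simplified cousin of Bialek’s predictive information, which considers the whole past and the whole future, and it is not Tononi’s integrated information, which is far harder to compute. The function name is our own invention.

```python
from collections import Counter
from math import log2

def one_step_predictive_info(seq):
    """Plug-in estimate, in bits, of I(X_t; X_{t+1}) for a discrete sequence."""
    pairs = list(zip(seq[:-1], seq[1:]))   # (present, next) pairs
    n = len(pairs)
    joint = Counter(pairs)
    past = Counter(a for a, _ in pairs)
    future = Counter(b for _, b in pairs)
    info = 0.0
    for (a, b), count in joint.items():
        p_ab = count / n
        # Each pair contributes p(a,b) * log2[p(a,b) / (p(a) p(b))].
        info += p_ab * log2(p_ab / ((past[a] / n) * (future[b] / n)))
    return info

# A constant signal carries no information about its future.
print(one_step_predictive_info([0] * 100))
# A strictly alternating signal: the present pins down the next step,
# so the estimate comes out very close to 1 bit.
print(one_step_predictive_info([0, 1] * 50))
```

A random coin-flip sequence would land near zero bits by the same measure, since knowing one flip tells you nothing about the next.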

Unfortunately, there’s still a catch. As powerful as these theories may be, they only allow scientists to calculate the complexity of a brain (or any other information-processing system) if they can measure all the information in it. There are so many bits of information flooding through our brains, and in such an inaccessible way, that it’s pretty much impossible to actually calculate their complexity.

Chris Adami of Michigan State and his colleagues decided to overcome these hurdles–of observing evolution and measuring information precisely–by programming a swarm of artificial creatures which they dubbed animats.

To create animats, the scientists first had to create the world in which they would struggle to survive, reproduce, and evolve. The scientists put them in a maze made of a series of walls. To move forward, the animats had to crawl along each wall to find a doorway. If they passed through the doorway, they could move forward to the next wall, where they could search for a new door. The animats that traveled through the most walls were then able to reproduce.

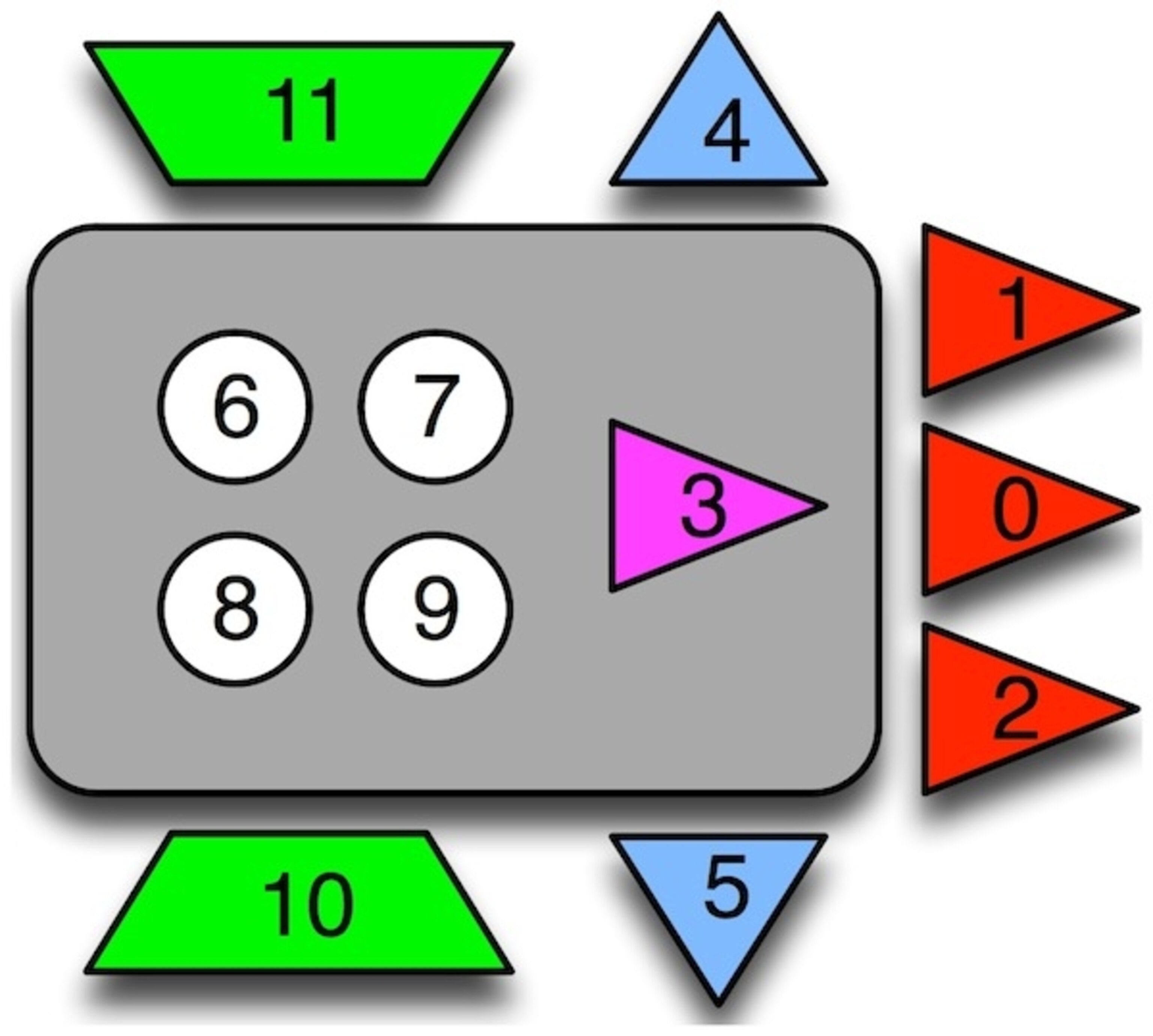

Here’s a diagram of the animat’s anatomy:

The red triangles, marked 0 through 2, are simple eyes. All they do is sense whether they are next to an obstacle or not. The pink triangle marked 3 is a sensor that detects whether the animat is in a doorway or not. Sensors 4 and 5 are collision detectors that sense whether the animat has crashed into the upper or lower borders of the maze. The information that these senses register is as simple as can be: they’re either on or off.

That information, on or off, flows from the senses to the animat’s brain: the circles marked 6, 7, 8, and 9. Each sense may be linked to one circle, or two, or all of them. The links may be strong or weak. If an eye has a strong connection to one of the circles, the circle may flip every time the eye senses an obstacle. A weak connection may mean that the circle only flips a quarter of the time the eye sees something. The parts of the brain can be linked to each other, too, helping to switch each other on and off.
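Here is a minimal Python sketch of strong and weak links, assuming each link is a (source, target, probability) triple that flips its target with that probability whenever the source is on. The wiring and the names `LINKS` and `update_brain` are hypothetical. As far as we know, the published animats used probabilistic logic gates rather than simple flip links, so treat this as a simplification of the description above, not the researchers’ model.

```python
import random

# Hypothetical wiring as (source, target, probability) triples.
# Nodes 0-5 are sensors, 6-9 brain circles, 10-11 legs, as in the diagram.
LINKS = [
    (0, 6, 1.0),   # strong link: eye 0 flips circle 6 every time it is on
    (1, 7, 0.25),  # weak link: eye 1 flips circle 7 a quarter of the time
    (6, 7, 0.5),   # brain-to-brain link
]

def update_brain(state, links, rng):
    """Return the next on/off state of all 12 nodes.

    Every link whose source is on flips its target with the link's
    probability. Sources are read from the current state, so all
    links act simultaneously.
    """
    nxt = list(state)
    for src, dst, p in links:
        if state[src] and rng.random() < p:
            nxt[dst] = 1 - nxt[dst]
    return nxt

state = [1] + [0] * 11  # only eye 0 senses an obstacle
print(update_brain(state, LINKS, random.Random(0)))  # circle 6 switches on
```

Modeling a link as a flip means two active links onto the same circle can cancel each other out, one of many details a real model has to pin down.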

Finally, the animat has legs, the green trapezoids marked 10 and 11. The brain can send signals to the legs, as can the sensors. The legs can respond in one of four ways–move left, move right, move forward, or do nothing.
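Two on/off legs are exactly enough for four behaviors, because two binary outputs have 2 × 2 = 4 combinations. The Python sketch below decodes them; which combination means which move is our assumption, and the published model may assign them differently.

```python
# Hypothetical encoding of the two leg nodes (10 and 11) into moves.
ACTIONS = {
    (0, 0): "do nothing",
    (1, 0): "move left",
    (0, 1): "move right",
    (1, 1): "move forward",
}

def decode_legs(leg10, leg11):
    """Turn the on/off states of legs 10 and 11 into one of four moves."""
    return ACTIONS[(leg10, leg11)]

print(decode_legs(1, 1))  # move forward
```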

To launch their experiment, the scientists created 300 animats with randomly generated instructions for how each part of their body worked. They then dropped each animat into an identical maze and ran their programs for 300 steps.

In those 300 steps, a lot of the animats just meandered up and down their first walls, making no progress. At the end of the run, the scientists grabbed the 30 animats that had gone the furthest and let them reproduce.

Each animat got to produce ten new offspring. Their offspring inherited the same code as their parent, but each position in the code had a small chance of mutating. These mutations could strengthen or weaken a link between an eye and a part of the brain, or could add an entirely new link. The parts of the brain could change the signals they sent to each other, or to the legs. As with the first generation, the scientists didn’t program in changes that would make the animats faster. The mutations dropped into the animat genomes at random.

The scientists then set the animats into their mazes again and let 300 steps pass by. Once again, they picked the 30 that managed to travel the furthest to reproduce. This process is natural selection in its essence. Some organisms have more offspring thanks to inherited variations in their genes, and new variation can arise through mutations. Over many generations, this process can spontaneously change how organisms work.
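The cycle of run, rank, copy with mutation, and repeat is a genetic algorithm with truncation selection. Here is a minimal Python sketch using the experiment’s population numbers. The maze is replaced by a hypothetical stand-in score, `distance_traveled`, and the genome is a toy list of numbers rather than animat wiring; both are assumptions for illustration, not the researchers’ code.

```python
import random

POP_SIZE = 300     # animats per generation
N_PARENTS = 30     # the 30 that went furthest
N_OFFSPRING = 10   # offspring per parent: 30 * 10 = 300

def distance_traveled(genome):
    # Hypothetical stand-in for 300 steps in the maze. A real version
    # would simulate the animat; here we just score a toy genome.
    return sum(genome)

def mutate(genome, rng, rate=0.05):
    # Each position has a small chance of being replaced at random.
    # Nothing steers the change toward a better score.
    return [rng.random() if rng.random() < rate else g for g in genome]

def next_generation(population, rng):
    ranked = sorted(population, key=distance_traveled, reverse=True)
    parents = ranked[:N_PARENTS]  # truncation selection: top 10 percent
    return [mutate(p, rng) for p in parents for _ in range(N_OFFSPRING)]

rng = random.Random(1)
population = [[rng.random() for _ in range(8)] for _ in range(POP_SIZE)]
for generation in range(50):
    population = next_generation(population, rng)
print(max(map(distance_traveled, population)))
```

Selection only ever looks at the score; the mutations themselves are blind, which is the point the experiment was built around.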

One of the luxuries of digital evolution is that you can let it run practically ad infinitum. The scientists let the animats reproduce for 60,000 generations. And in that time, the animats evolved into much better maze-travelers.

Here, in glorious Pong-era video, is an animat from the 12th generation. The top panel shows it moving through the full maze, while the lower left panel zooms in on the animat. The lower right panel shows the activity in the animat’s brain. Note how it takes its own sweet time meandering up and down the walls:

And here is an animat from the 60,000th generation. It moves with assurance and swiftness. The researchers were able to calculate the perfect strategy for an animat, and this evolved specimen had reached 93% of the ideal performance. (The early animat from the 12th generation only performed at 6%.)

You might be wondering what those red arrows are in the doorways. They’re clues. Each arrow tells which direction to go to find the next doorway. The doorway sensor can respond to those signals, but at the start of the experiment, the animats have no way to use the information.

But after thousands of generations, some of the animats evolved the ability to pick up the clues. Their brains evolved wiring that allowed them to store the information they picked up in each doorway and use it to guide their movements until they got to the next doorway, whereupon they kicked out the old information and recorded the information in the new doorway. Once the animats evolved this simple memory, their performance skyrocketed.
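The memory described above boils down to a single stored clue that is overwritten in every doorway and read everywhere else. This Python sketch is a hypothetical abstraction: in the evolved animats the clue lives in the on/off state of brain circles feeding back on themselves, whereas here it is just a variable, and the names `step` and `run` are our own.

```python
def step(memory, in_doorway, arrow):
    """One time step of a hypothetical one-bit memory.

    memory is the last clue seen, "left" or "right". In a doorway the
    old clue is discarded and the new arrow stored; between doorways
    the stored clue steers the animat along the wall.
    """
    if in_doorway:
        return arrow, "move forward"   # record the new clue, pass through
    return memory, "move " + memory    # keep the clue, slide toward the door

def run(trace, memory="left"):
    """Feed a sequence of (in_doorway, arrow) observations through step()."""
    moves = []
    for in_doorway, arrow in trace:
        memory, move = step(memory, in_doorway, arrow)
        moves.append(move)
    return moves

trace = [(False, None), (True, "right"), (False, None), (True, "left"), (False, None)]
print(run(trace))
# ['move left', 'move forward', 'move right', 'move forward', 'move left']
```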

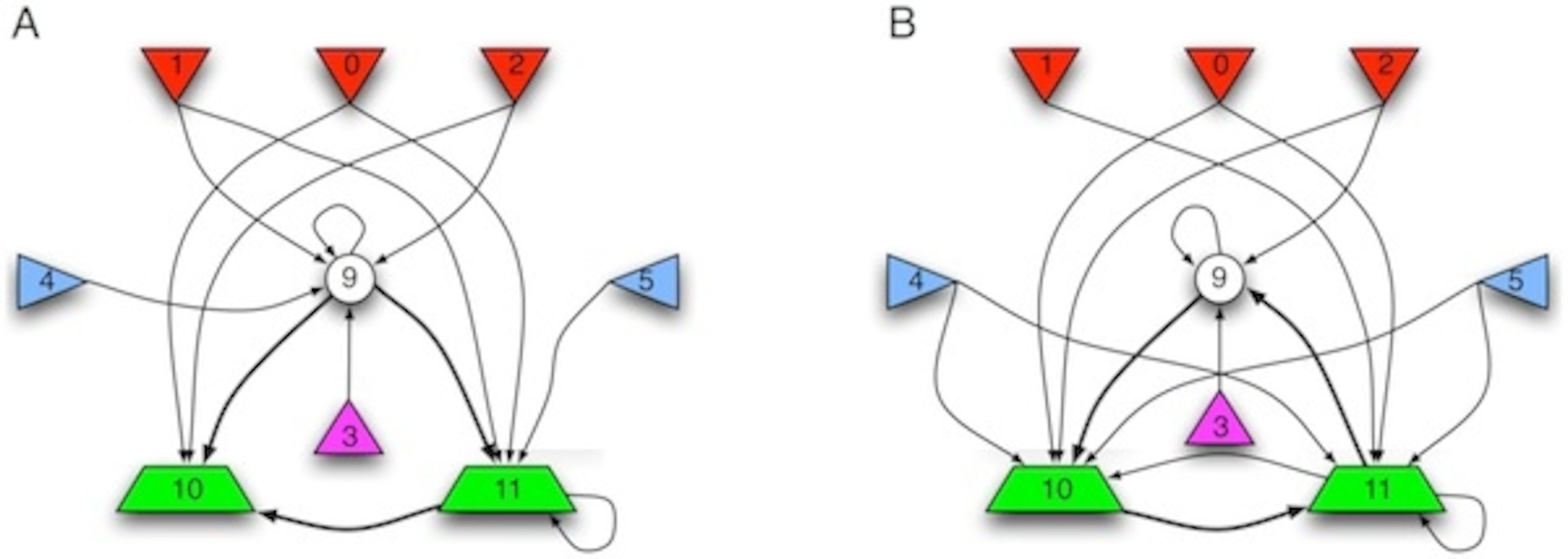

It’s startling to open the virtual skulls of the animats and see what their evolved brains look like. Here are two different animats after 49,000 generations.

Neither animat even uses three of the four parts of its brain; that’s why only the circle marked nine is shown in the diagrams. Each one has evolved different patterns of inputs and outputs. It’s hard to break apart the systems into individual circuits and say that they do anything in particular. The behavior of the animat emerges from the whole network.

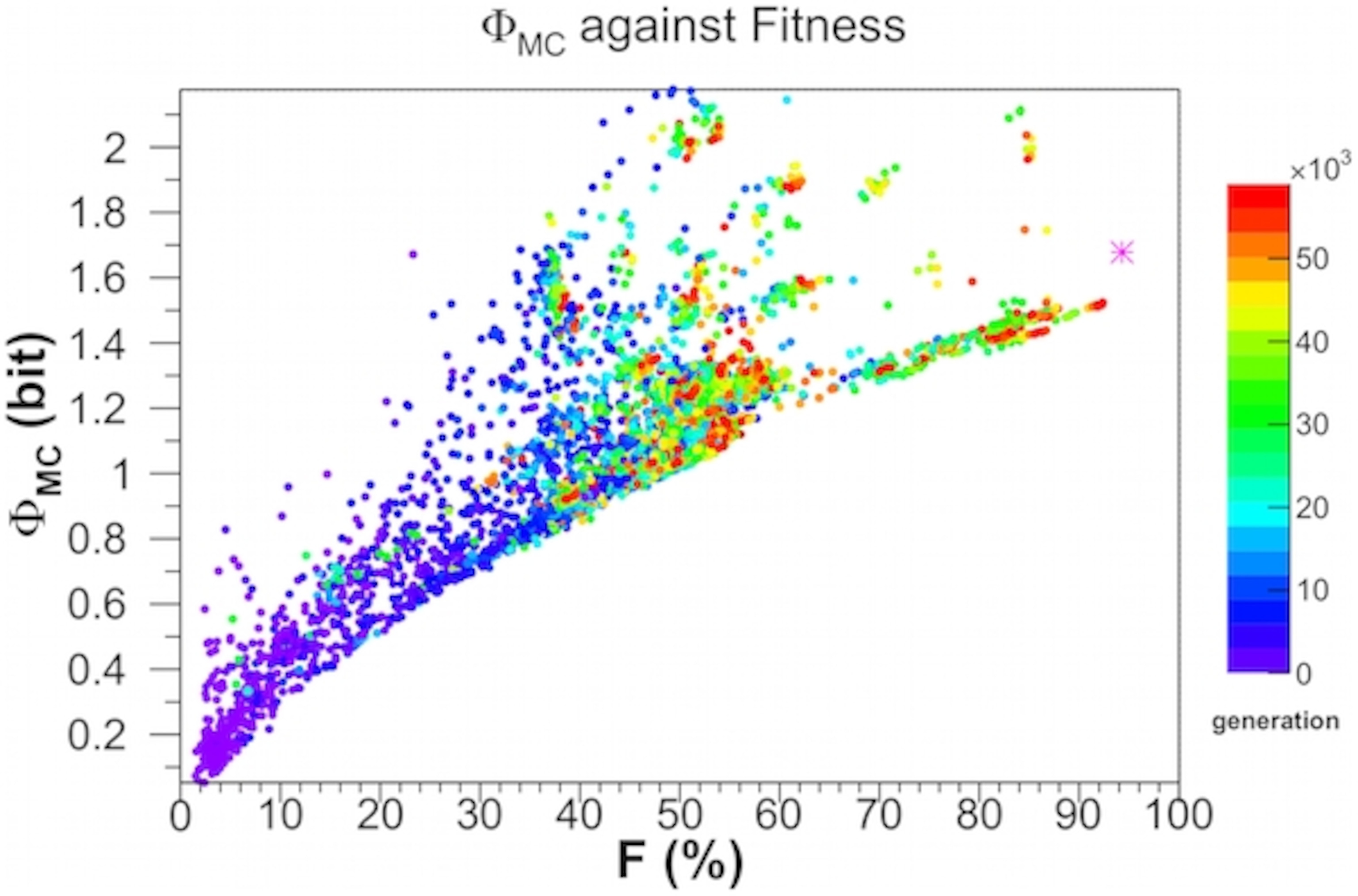

Thanks to the design of the experiment, the scientists could measure the complexity of the animats as they evolved, though it took a colossal amount of computing time. This graph shows the complexity of animats along the Y axis, using a measurement of Tononi’s integrated information. The color of the dots represents which generation each animat came from: blue is from the early generations, and red from the latest ones. And, finally, the X axis shows how fast the animats can travel, as a percentage of the highest possible speed an animat could go.

There are two lessons from this graph, which can seem contradictory.

As the animats get better at getting through the maze, they get more complex. No 50% animat is less complex than a 20% animat.

To see whether selection really was essential to the rise of complexity, the scientists ran a test on some highly evolved, highly complex animats. At the end of each maze run, they didn’t pick out the top 10 percent of the animats as the parents of the next generation. They just picked 30 animats at random from all 300. After 1,000 generations without selection, the animats were pretty much hopeless, hardly able to find a single doorway. And their complexity crashed.
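The no-selection control comes down to one change in how parents are chosen. In this minimal Python sketch, `pick_parents` and its `selection` flag are hypothetical names, and the toy "animats" are just numbers whose fitness is their own value.

```python
import random

def pick_parents(population, fitness, rng, n_parents=30, selection=True):
    """Choose the parents of the next generation.

    With selection, keep the n_parents with the highest fitness (the top
    10 percent of 300). Without it, draw n_parents at random, so fitness
    plays no role in who reproduces.
    """
    if selection:
        return sorted(population, key=fitness, reverse=True)[:n_parents]
    return rng.sample(population, n_parents)

def fitness(animat):
    return animat  # toy animats: fitness is just the number itself

rng = random.Random(0)
population = list(range(300))
top = pick_parents(population, fitness, rng)
random_pick = pick_parents(population, fitness, rng, selection=False)
print(sum(top) / 30)          # 284.5: the best 30 of 0..299
print(sum(random_pick) / 30)  # a typical value from the whole range
```

With random parents, mutations keep accumulating but nothing filters out the harmful ones, which is consistent with the collapse in both performance and complexity described above.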

This research shows that an increase in complexity comes with adaptation–at least for animats. But look again at the graph. Look at the animats at any given level of fitness. Some of them are more complex than others. In other words, some animats can race along at the same speed as other animats that are twice as complex. All that extra complexity seems like a waste.

In the world of animats, evolving a better brain requires a minimal increase in complexity, so as to take in more information and make better use of it. Extra complexity doesn’t necessarily make an animat better at traveling the maze, although it may provide the raw material for further evolutionary advances.

It would be interesting to see what would happen if some of the rules for animats were changed. In this experiment there was no cost to extra complexity, something that may not be true in the real world. The human brain makes huge demands for energy: twenty times more than the same weight of muscle would. There’s lots of evidence that efficiency has a strong influence on the anatomy of our brains. Perhaps we might have more complex brains if we didn’t have to pay that energy bill. And if the animats had to pay a cost for extra complexity, they would evolve only the bare minimum. That’s an experiment I’d like to see. And don’t forget that arcade music….

Sources:

Edlund JA, Chaumont N, Hintze A, Koch C, Tononi G, et al. (2011) Integrated Information Increases with Fitness in the Evolution of Animats. PLoS Comput Biol 7(10): e1002236. doi:10.1371/journal.pcbi.1002236

Joshi NJ, Tononi G, Koch C (2013) The Minimal Complexity of Adapting Agents Increases with Fitness. PLoS Comput Biol 9(7): e1003111. doi:10.1371/journal.pcbi.1003111